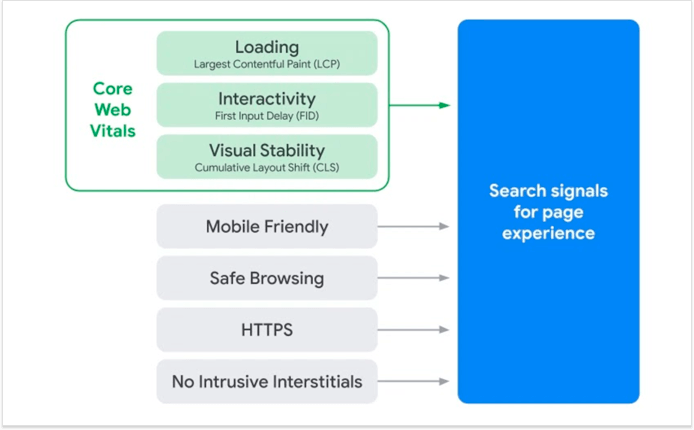

Google has recently named three user experience metrics to become new search ranking factors. The metrics are designed to measure loading speed, interactivity, and visual stability, and are known jointly as Core Web Vitals. Together with mobile-friendliness, safety, security, and the lack of pop-ups, these new signals will be used to assess overall page experience and to cast a final vote in deciding whether a page is worth ranking.

The new signals will not become a part of the algorithm until sometime next year, but some of the keener SEOs are already racing each other to get a perfect score. For the rest of us, it's probably too soon to be worrying about Core Web Vitals, but it wouldn't hurt to learn what they are and how important they are going to be.

Core Web Vitals are the result of a very long search for reliable user experience metrics. Over the years, Google has tested a number of metrics for measuring the perceived experience of interacting with a web page, and many metrics came close, but none quite hit the spot. Until now.

Google suggests that this new combination of three user experience metrics is finally able to quantify the first impression that a page makes on a user. As added motivation, it claims that websites meeting the benchmarks for a positive first impression are 24% less likely to lose users while pages load.

Largest Contentful Paint evaluates loading performance and is reported using the following user experience benchmarks:

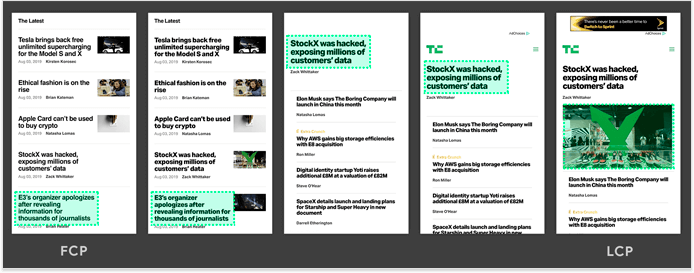

At the moment, LCP is Google's answer to the question of how fast a page feels. Before LCP, there were First Paint, First Contentful Paint, First Meaningful Paint, Time to Interactive, and First CPU Idle, and each of those metrics had its own limitations. LCP is far from perfect, but right now it's the best measurement of when the user believes the page is loaded.

To calculate LCP, Google times the rendering of the largest content element (text/image/video) on the screen. As the composition of the screen changes during loading, Google switches to the new largest element. This goes on until either the page is fully loaded or the user begins interacting with the page.
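The candidate-switching logic described above can be sketched in a few lines of Python. This is only an illustration, not the browser's actual implementation: the `PaintEvent` record, the element sizes, and the timings are all made-up assumptions.

```python
from typing import List, Optional, Tuple

# Hypothetical paint record: (render_time_ms, element_size_px, label).
PaintEvent = Tuple[float, float, str]


def largest_contentful_paint(
    paints: List[PaintEvent],
    first_interaction_ms: Optional[float] = None,
) -> Optional[PaintEvent]:
    """Return the paint event that would be reported as LCP, or None."""
    candidate: Optional[PaintEvent] = None
    for paint in sorted(paints, key=lambda p: p[0]):
        render_time, size, _label = paint
        # Stop updating once the user has started interacting with the page.
        if first_interaction_ms is not None and render_time > first_interaction_ms:
            break
        # A newly rendered element replaces the candidate only if it is larger.
        if candidate is None or size > candidate[1]:
            candidate = paint
    return candidate


# Illustrative data only.
paints = [
    (400.0, 12_000.0, "headline text"),
    (900.0, 90_000.0, "hero image"),
    (2600.0, 150_000.0, "late banner"),
]
lcp_full = largest_contentful_paint(paints)
lcp_interrupted = largest_contentful_paint(paints, first_interaction_ms=1500.0)
print(lcp_full)
print(lcp_interrupted)
```

With no interaction, the late banner becomes the LCP element. If the user interacts at 1.5 seconds, the hero image is the last candidate recorded.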

There are many components that can influence load speed, but the main suggestions for improving LCP include faster server response times, faster resource loading, less render-blocking JavaScript and CSS, and improved client-side rendering.

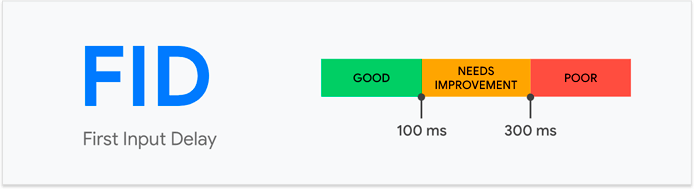

First Input Delay evaluates page responsiveness and is reported using the following user experience benchmarks:

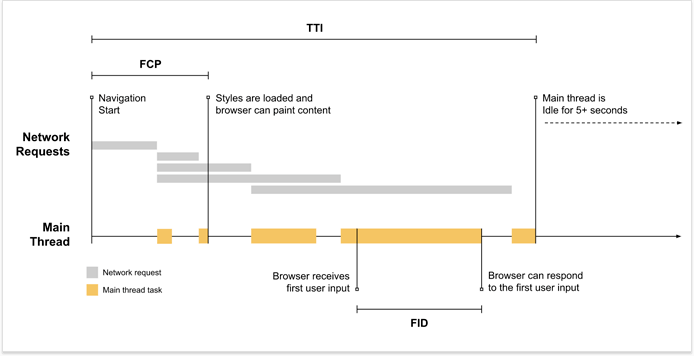

FID is the time it takes for a page to react to the user's first action (a click, tap, or keypress). Unlike the other two core vitals, FID can only be measured in the field, as it requires an actual user to choose when to perform that first action. In the lab, FID is replaced with Total Blocking Time (TBT), which is the total time between First Contentful Paint and Time to Interactive during which the main thread is blocked for long enough to delay input. TBT is correlated with FID but reports larger values.
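To make the lab substitute a little more concrete, here is a rough sketch of how TBT could be computed from a list of main-thread tasks. It assumes the commonly documented 50 ms long-task threshold, which this article does not state, and it simplifies by ignoring tasks that straddle the measurement window. The task list and timings are invented for illustration.

```python
from typing import List, Tuple

LONG_TASK_THRESHOLD_MS = 50.0  # assumption: time beyond 50 ms counts as blocking

# Hypothetical task record: (start_ms, duration_ms).
Task = Tuple[float, float]


def total_blocking_time(tasks: List[Task], fcp_ms: float, tti_ms: float) -> float:
    """Sum the blocking portion of every task that runs between FCP and TTI."""
    total = 0.0
    for start, duration in tasks:
        # Simplification: skip tasks that are not fully inside the window.
        if start < fcp_ms or start + duration > tti_ms:
            continue
        # Only the part of a task beyond the threshold is counted.
        total += max(0.0, duration - LONG_TASK_THRESHOLD_MS)
    return total


# Illustrative data only.
tasks = [(1000.0, 30.0), (1200.0, 250.0), (1600.0, 90.0), (5000.0, 400.0)]
tbt = total_blocking_time(tasks, fcp_ms=800.0, tti_ms=3000.0)
print(tbt)
```

Here the 30 ms task contributes nothing, the 250 ms and 90 ms tasks contribute 200 ms and 40 ms, and the last task falls outside the window.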

Longer input delays tend to occur while the page is still loading, when some of the content is already visible but not yet interactive because the browser is busy loading the rest of the page.

The main effort in optimizing FID focuses on faster page loading, namely code splitting and using less JavaScript.

Cumulative Layout Shift evaluates visual stability of a page and is reported using the following user experience benchmarks:

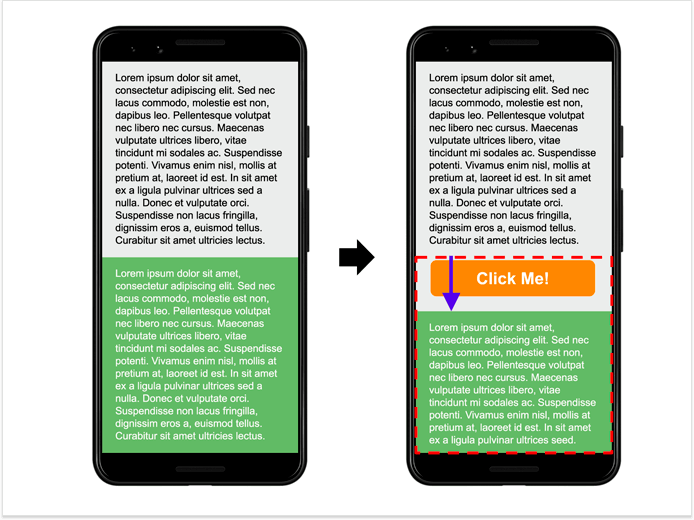

Layout shift, aka layout jank or content jank, is that thing when the content keeps moving about even though the page looks like it's fully loaded. Sometimes it's nothing more than annoying, and other times it may cause you to click the wrong thing, provoking even more unwanted changes to the page.

The CLS score is calculated by multiplying the share of the screen that shifted unexpectedly during loading by the distance it traveled. In the example below, half of the screen has been affected by the shift, as indicated by the dotted rectangle. At the same time, the distance the content traveled is 15% of the screen, as indicated by the blue arrow. So, to calculate the CLS score, we multiply the affected area (0.5) by the distance traveled (0.15) and get a score of 0.075, which is within the benchmark.
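The same arithmetic can be written as a short Python sketch. The `LayoutShift` class and its field names are hypothetical. The second shift in the example is invented to show that, as the word "cumulative" suggests, the scores of individual shifts add up.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LayoutShift:
    impact_fraction: float    # share of the screen affected by the shift
    distance_fraction: float  # distance traveled, as a share of the screen

    @property
    def score(self) -> float:
        return self.impact_fraction * self.distance_fraction


def cumulative_layout_shift(shifts: List[LayoutShift]) -> float:
    """Add up the scores of all unexpected shifts on the page."""
    return sum(shift.score for shift in shifts)


# The shift from the example above: half the screen moves by 15%.
article_example = LayoutShift(impact_fraction=0.5, distance_fraction=0.15)
# A second, made-up shift to show accumulation.
extra_shift = LayoutShift(impact_fraction=0.2, distance_fraction=0.1)

single_score = article_example.score
page_score = cumulative_layout_shift([article_example, extra_shift])
print(round(single_score, 3), round(page_score, 3))
```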

CLS is the easiest of the Core Web Vitals to optimize. It boils down to including size attributes for your images and videos and never inserting new content above existing content.

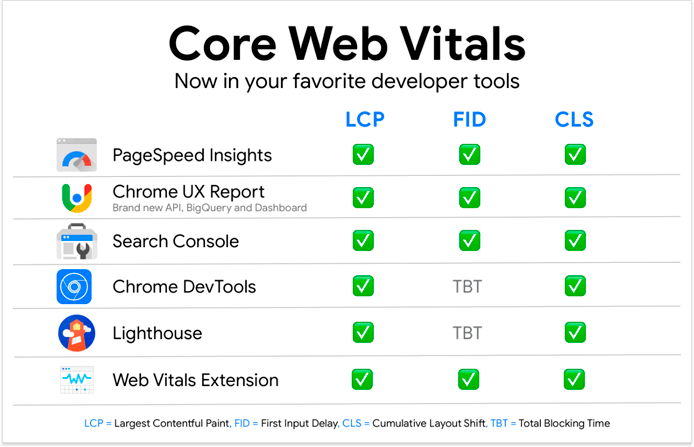

The vitals can be measured using any of these six tools for web developers: PageSpeed Insights, Chrome UX Report, Search Console, Chrome DevTools, Lighthouse, and Web Vitals Chrome Extension.

The main distinction between these tools is that some of them use field data from actual users, while others measure performance by simulating user behavior in a lab environment. Tools that use field data are a better choice, both because this is what Google is going to use as a ranking signal and because FID can only be measured in the field and cannot be reproduced in the lab. In the table above, you can tell the lab tools apart because they report Total Blocking Time (TBT) in place of FID.

The tools also differ in their applications and required levels of technical proficiency. Search Console can be used as your Core Web Vitals dashboard, providing a bird's-eye view of your entire website, while DevTools and Lighthouse are better suited for drilling down and doing actual page optimization work. The Chrome Extension and PageSpeed Insights are best for making quick page assessments.
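For a feel of how field data turns into a verdict, here is a hedged sketch that rates a set of real-user samples. It assumes Google's published thresholds (LCP 2.5 s and 4 s, FID 100 ms and 300 ms, CLS 0.1 and 0.25) and the documented practice of assessing the 75th percentile of page loads. Neither is spelled out in the text of this article, and the sample values are made up.

```python
import math
from typing import Dict, List, Tuple

# Assumed thresholds: (upper bound for "good", upper bound for "needs improvement").
# LCP and FID are in milliseconds; CLS is unitless.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "LCP": (2500.0, 4000.0),
    "FID": (100.0, 300.0),
    "CLS": (0.1, 0.25),
}


def percentile_75(samples: List[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, value: float) -> str:
    """Map a metric value to one of the three benchmark buckets."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Illustrative LCP samples (ms) from eight hypothetical page loads.
lcp_samples = [1800.0, 2100.0, 2400.0, 2600.0, 5200.0, 1900.0, 2300.0, 3100.0]
lcp_p75 = percentile_75(lcp_samples)
lcp_rating = rate("LCP", lcp_p75)
print(lcp_p75, lcp_rating)
```

Note that most of these loads are individually "good", yet the page as a whole is rated on the slower tail of its visits.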

Google says it will use page experience signals as a tie-breaker, in case there are several pages with equally great, relevant content. So, while it's definitely another thing to worry about, optimizing for the core vitals should not be prioritized over things like quality content, search intent, and page authority.

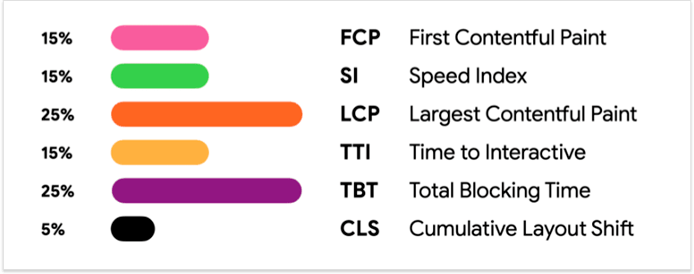

As for the individual Core Web Vitals, we do get a hint about their weight relative to each other. All three vitals are used in the latest version of Lighthouse, whose performance score is broken down by the weight of each component: LCP is 25%, TBT (the lab equivalent of FID) is 25%, and CLS is 5%. Although the weights may be revised in later versions, it looks like CLS is currently much less important than the other two.
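A quick back-of-the-envelope calculation shows what those weights imply. The sketch below uses only the three percentages quoted above; how Google will actually weigh the vitals for ranking is not known, so treat this purely as arithmetic on the Lighthouse numbers.

```python
# Lighthouse weights quoted above, in percent of the overall score.
WEIGHTS = {"LCP": 25, "TBT": 25, "CLS": 5}

# Share of the overall Lighthouse score driven by the three vitals.
vitals_total = sum(WEIGHTS.values())

# Each vital's share relative to the other two.
relative_share = {name: weight / vitals_total for name, weight in WEIGHTS.items()}

print(vitals_total)
for name, share in relative_share.items():
    print(name, round(share * 100, 1))
```

Together the three vitals account for 55% of the Lighthouse score, and within that group CLS carries one fifth of the weight of either LCP or TBT.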

The introduction of the Core Web Vitals has established a new-ish branch of search optimization, and it is still unclear how events will unfold, but here is what's been charted by Google so far:

Sometime in 2021, Core Web Vitals will become official ranking signals. Google has assured webmasters that they will be given six months' notice before these changes take place.

Core Web Vitals will replace AMP as a qualifier for getting into Top Stories. Previously, Google was widely criticized for not extending AMP benefits (pre-rendering, Top Stories placement) to equally fast non-AMP pages, to which it replied that it couldn't because there was not yet a reliable way to measure the perceived page speed of non-AMP pages. It seems like the Core Web Vitals will finally bridge this gap.

In the future, the Core Web Vitals may be expanded to include other user experience metrics. Some of the candidates currently include input delays for all interactions (not just the first one), smoothness (transitions/animations), and metrics that will enable AMP-like prerendering of pages in SERP.

Let me know if I'm wrong, but the introduction of the Core Web Vitals is good news. It's aimed at removing some of the most common UX pain points, which is definitely a step towards a more delightful web, as Google likes to put it. It's also a step towards increased transparency between Google and site owners — there isn't much we know for certain about the ranking algorithm, so the addition of more official factors is always welcome. And now that Google is on a path to collecting reliable UX metrics, perhaps there will be more ranking opportunities for those who are willing to go the extra mile.

By: Andrei Prakharevich