Today's SEO in 8 Trends According to Leading SEO Experts

8 trending topics from the SMX East conference

In late October 2018, New York City gathered SEO and SEM people from all over the world, all of them eager to connect and learn about the current state of the industry.

I attended the conference too and followed the SEO track. In case you missed something or couldn't come, I've collected the most important things I learned to share with you here.

1. New Google Search Console: look at your site from unexpected angles

As you might remember, the new version of Google Search Console (GSC) has been available in beta since January. In September, it graduated out of beta with new features.

Fili called for a deep dive under the bonnet of the new GSC, and we gladly accepted his invitation.

Cool new features of Google Search Console (both in and out of beta):

- New reports:

| Report | What's new |
|---|---|
| Index Coverage | More detailed information: URLs are given one of 4 statuses (Error, Valid, Valid with warnings, and Excluded), plus more details on the reasons for these statuses. |
| Performance | Similar to the Search Analytics report in the previous version, but with an expanded date range: 16 months instead of 3. |
| Links | Consolidates the functionality of the "Links to your site" and "Internal links" reports from the old GSC. |
| Manual actions | This report has moved into a separate section. |

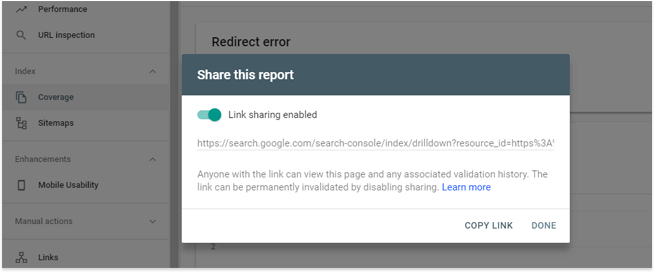

- Report Share function: you can now share a link to a report with anyone logged in to a Google account. It's handy if you need to share issues with, for example, developers who don't have access to the GSC property.

- Report filters that got much more granular: you can now get even more detailed insight into your data by applying rules (equals/not equals, greater than/smaller than).

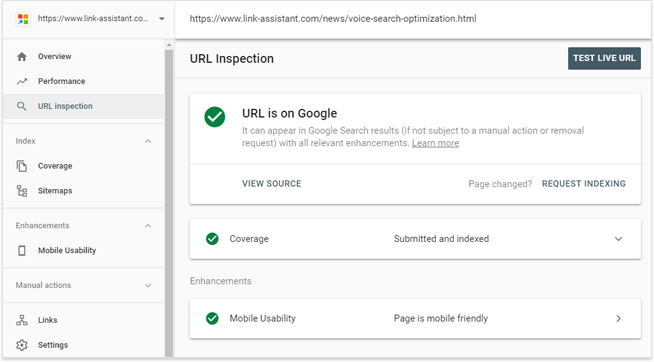

- Live URL tests: the new URL Inspection tool lets you test URLs in real time. These tests are based not on the last indexed version of the URL, but on how Google sees it at this very moment. Plus, if you had any issues and they are fixed on the live version, you can validate your fix and immediately ask Google to recrawl the page.

2. Mobile-First Indexing: which mobile way to choose?

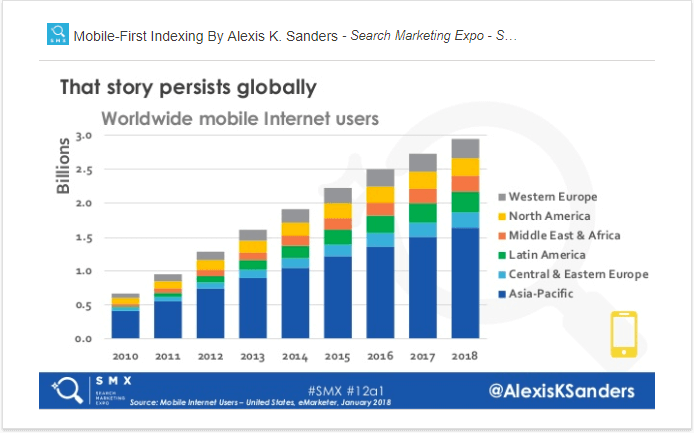

Since Google started migrating sites to mobile-first indexing, the process has created quite a buzz in the SEO community. Alexis explained it all:

"There is a worldwide shift towards mobile devices and portability of information, Google is following this trend."

Since March 2018, batches of sites have been migrating to mobile-first indexing. What does it mean for you?

- Googlebot Smartphone will crawl your site more often than the regular desktop Googlebot.

- There is still a single index of both desktop and mobile versions. Eventually, the algorithms will use just the mobile version for ranking.

- You cannot switch off mobile-first indexing for your site once it's been migrated.

- You will receive alerts from Google if there are any mobile-first issues.

- There is no need to change your XML sitemaps, backlinks (Google will use canonical clusters), or canonical tags.

- The migration may take a few years to complete.

| Fine | Not fine at all |
|---|---|
| Having just a desktop version | Shameless use of interstitials |
| Hamburger menus, content in accordions, breadcrumbs hidden on mobile | Use of Flash |

Alexis asked the audience what kind of mobile experience they offer their users. Most people indicated they use responsive design, while nobody, I think, raised a hand for m-dot or separate sites. Either way, Ms. Sanders gave recommendations for every type of mobile site, as all of them are legitimate:

Recommendations for mobile management:

| Responsive | Separate, Dynamic | AMP (when there are both AMP and non-AMP versions of a page) | Desktop only |
|---|---|---|---|
| Monitor performance | Ensure parity between mobile and desktop (in terms of content, tagging, schema, internal links, etc.) | Follow separate URLs best practices | Check server log files |
| Monitor indexation status | Ensure servers have capacity to handle influx in crawl rate | Check server log files | Think of getting a mobile site experience |
| Check server log files | Validate robots.txt | | |
| | Check server log files | | |

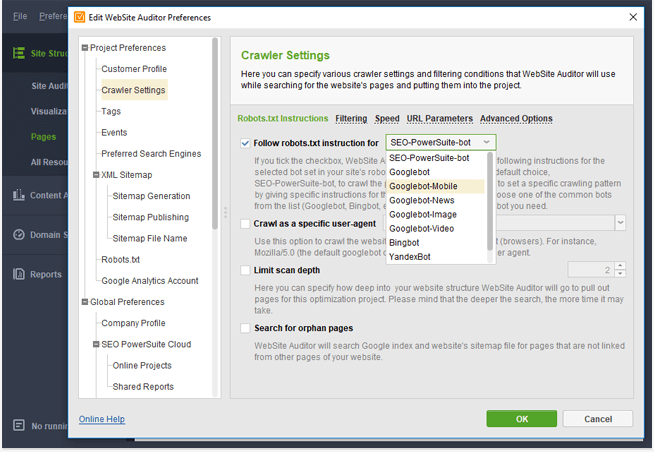

Crawl your site as a mobile bot to understand how search engines see your pages. You can do this in WebSite Auditor: once you've created a project for your site, go to Preferences > Crawler Settings and select Googlebot-Mobile.

After the crawl is complete, check all the sections to see how your site is performing on mobile.
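If you run separate or dynamic mobile pages, the parity recommendation above is also easy to spot-check with a script. Below is a minimal Python sketch, using only the standard library, that compares the title, h1 to h3 headings, and links of a desktop and a mobile HTML version of the same page. The function names are hypothetical, fetching the two versions is left to you, and a real audit would also cover structured data, meta tags, and body copy.

```python
from html.parser import HTMLParser


class SEOSignals(HTMLParser):
    """Collects the title, h1-h3 headings, and link targets of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []
        self.links = set()
        self._capturing = None  # tag whose text is currently being read

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1", "h2", "h3"):
            self._capturing = tag
            if tag != "title":
                self.headings.append("")
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag == self._capturing:
            self._capturing = None

    def handle_data(self, data):
        if self._capturing == "title":
            self.title += data.strip()
        elif self._capturing is not None:
            self.headings[-1] += data.strip()


def signals(markup):
    parser = SEOSignals()
    parser.feed(markup)
    parser.close()
    return parser


def parity_issues(desktop_html, mobile_html):
    """Lists desktop signals that are missing or different on mobile."""
    desktop, mobile = signals(desktop_html), signals(mobile_html)
    issues = []
    if desktop.title != mobile.title:
        issues.append(f"title differs: {desktop.title!r} vs {mobile.title!r}")
    lost_headings = [h for h in desktop.headings if h not in mobile.headings]
    if lost_headings:
        issues.append(f"headings missing on mobile: {lost_headings}")
    lost_links = sorted(desktop.links - mobile.links)
    if lost_links:
        issues.append(f"links missing on mobile: {lost_links}")
    return issues


# Made-up desktop and mobile versions of one page.
desktop_page = (
    "<html><head><title>Running shoes</title></head><body>"
    "<h1>Running shoes</h1><h2>Size guide</h2>"
    "<a href='/size-guide'>Sizes</a><a href='/returns'>Returns</a>"
    "</body></html>"
)
mobile_page = (
    "<html><head><title>Running shoes</title></head><body>"
    "<h1>Running shoes</h1><a href='/size-guide'>Sizes</a>"
    "</body></html>"
)
issues = parity_issues(desktop_page, mobile_page)
```

An empty list means the checked signals match; anything else points to content that Google may no longer see once it indexes the mobile version.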

3. Mobile Page Speed Update: Optimization beats Speed

We also had a special reason to attend SMX East in New York — our Founder and CMO Aleh Barysevich was giving a speech there. The speech was based on the recently launched Google Page Speed Update and our experiment tracking the real influence of the Update on organic rankings.

Here are the most important highlights of the speech:

- Google goes crazy speed-wise

While users expect mobile pages to load within 3 seconds, the average mobile webpage still takes 15.3 seconds to load. Trying to serve those demanding users, Google has become obsessed with page speed and, among other efforts, has made mobile page speed a ranking factor.

- RAIL performance model

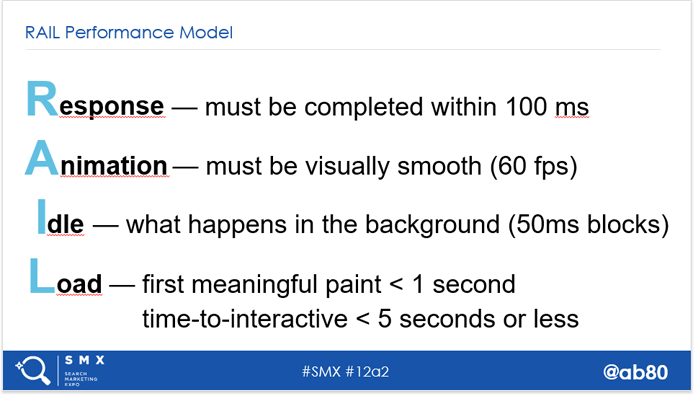

To build a fast site, web developers and SEOs have to deal with dozens of technical and business limitations. They also have to avoid outdated SEO tactics that may do more harm than good. To cut through this optimization chaos, Google suggests optimizing according to the RAIL (Response, Animation, Idle, Load) model.
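To make the RAIL budgets a bit more tangible, here's a small Python sketch that flags which phases of a page exceed their budget. The numbers in `RAIL_BUDGETS_MS` are assumptions based on my reading of Google's RAIL guidance (roughly 50 ms to process input, 10 ms of work per animation frame, idle work in chunks under 50 ms, interactive within 5 seconds); check the current documentation before relying on them. The measurements you feed in would come from your own lab tests.

```python
# Assumed RAIL budgets in milliseconds; verify against Google's current docs.
RAIL_BUDGETS_MS = {
    "response": 50,    # time to process an input event
    "animation": 10,   # work per animation frame
    "idle": 50,        # longest chunk of background work
    "load": 5000,      # time until the page is interactive
}


def rail_violations(measurements_ms):
    """Returns the RAIL phases whose measured duration exceeds the budget."""
    return sorted(
        phase
        for phase, value in measurements_ms.items()
        if phase in RAIL_BUDGETS_MS and value > RAIL_BUDGETS_MS[phase]
    )


# Hypothetical lab measurements for one page.
over_budget = rail_violations(
    {"response": 35, "animation": 14, "idle": 48, "load": 7200}
)
```

Here `over_budget` comes out as `["animation", "load"]`, which tells you where to start optimizing.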

- Google uses field data, not lab data, for measuring speed

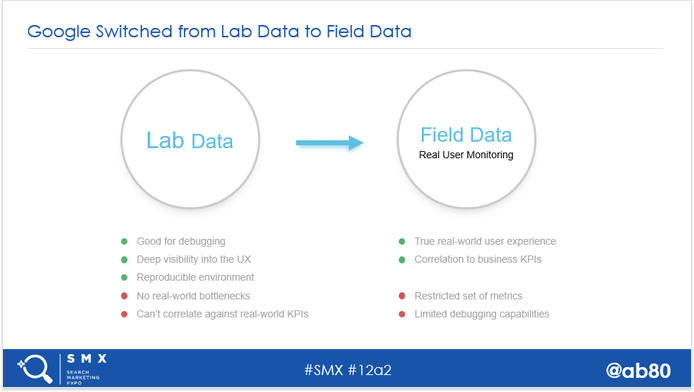

Once the Update was announced, Google PageSpeed Insights changed its interface and got a new section: Speed. Speed is measured with two metrics: First Contentful Paint (FCP) and DOM Content Loaded (DCL).

The game-changing part here is how those metrics are measured. They are collected from millions of real users opening websites in their Chrome browsers. So if your users have slow internet connections or slower devices, Google will most probably see your website as "Slow" too. Thus, we may say that Google has fully switched from "lab data" to "field data", or Real User Monitoring (RUM).

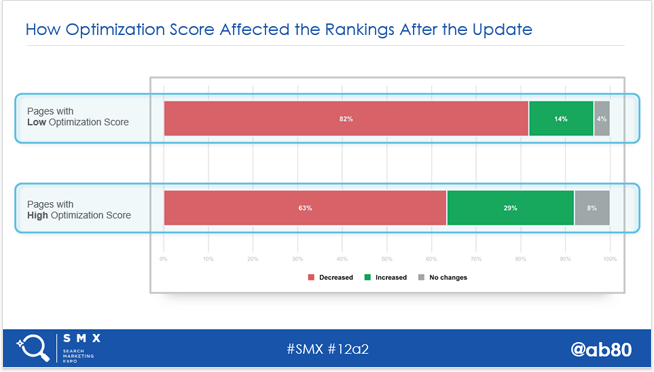

- Optimization score is now more important than Speed score (according to the experiment)

"Google doesn't fully rely on the loading speed metrics in its algorithm, and for SEO it's more important to have a high Optimization Score rather than a really high Speed Score. (At least for now.)"

SEO PowerSuite's team started its real-data experiment in May 2018. We've been measuring Optimization score, FCP, and DCL for a million pages ranking in the top 30 positions for 33,000 keywords.

We've found out the following:

- Both before and after the Update, launched in July, the correlation between average Optimization score and ranking was very high: 0.97. At the same time, there was no correlation between Speed score metrics and rankings.

- Having compared the progress of the slowest and fastest pages separately, we found that even if a site has a low Speed score in PageSpeed Insights, it still keeps its rankings. However, if a site is under-optimized, its rankings drop, and vice versa.
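For readers curious about what a correlation figure like 0.97 means, here's a minimal Python sketch of the Pearson correlation coefficient applied to made-up numbers. It's an illustration only, not the experiment's actual methodology, which isn't described here. Note the sign: if rankings are coded as positions (1 = top), better-optimized pages sitting higher up produce a coefficient close to -1, so how a study encodes "ranking" decides whether a strong relationship shows up as +0.97 or -0.97.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical data: average Optimization score of the pages found at
# each ranking position (1 = top of the SERP).
positions = [1, 2, 3, 4, 5]
avg_scores = [92.0, 90.5, 89.0, 88.2, 86.9]
r = pearson(positions, avg_scores)
```

With these numbers `r` is strongly negative, because a smaller position number means a better ranking.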

So for now, it's safe to focus on technical optimization of your site. Just keep an eye on the industry news as well as the results of our ongoing experiment.

- Analyze Google's PageSpeed Insights rules.

- For more details, grab this ultimate guide to improving your Optimization score.

- You can't change how real users experience your site, but you can analyze your real audience using the same data Google does. The Chrome User Experience Report (CrUX) is free to explore on the Google BigQuery platform.

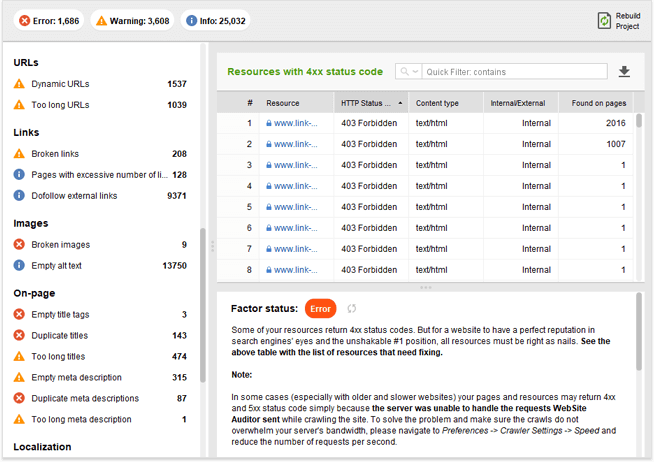

- Audit your site to see how well it's optimized. Run WebSite Auditor, create a project for your site and go straight to Site Structure > Site Audit. Analyze the audit results and fix all the Warning and Error issues.
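Once you have CrUX data for an origin, the key move is reading its histograms. In the BigQuery dataset, a metric such as `first_contentful_paint` is stored as a histogram of bins with `start`, `end`, and `density` fields, and the share of page loads faster than some threshold is the sum of the densities of the bins below it (in SQL, a `SUM(bin.density)` over the unnested bins). The Python sketch below does the same on an already-exported list of bins; the sample densities are invented, and the 1,000 ms and 2,500 ms cut-offs are arbitrary examples, not Google's official thresholds.

```python
def share_below(bins, threshold_ms):
    """Share of page loads in histogram bins that start below threshold_ms.

    Each bin mirrors a CrUX histogram bin: {'start': ms, 'end': ms,
    'density': fraction}. A bin straddling the threshold is counted whole.
    """
    return sum(b["density"] for b in bins if b["start"] < threshold_ms)


# Invented FCP histogram for one origin (densities sum to 1.0).
fcp_bins = [
    {"start": 0, "end": 1000, "density": 0.45},
    {"start": 1000, "end": 2500, "density": 0.35},
    {"start": 2500, "end": 10000, "density": 0.20},
]
fast = share_below(fcp_bins, 1000)
fast_or_average = share_below(fcp_bins, 2500)
```

Comparing these shares for your origin and your competitors' gives you a field-data view similar to the one PageSpeed Insights shows.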

4. JavaScript SEO: tips & tricks to do it right

When you hear the name Bartosz Goralewicz, you can bet he'll talk about JavaScript. That was also the case at the conference.

According to Bartosz's JS SEO timeline, it's a hot topic right now: it saw a golden age in 2017, and today we're living through the JavaScript SEO hype.

"Is JavaScript evil? No, it's way worse, it is complex."

JavaScript SEO best practices

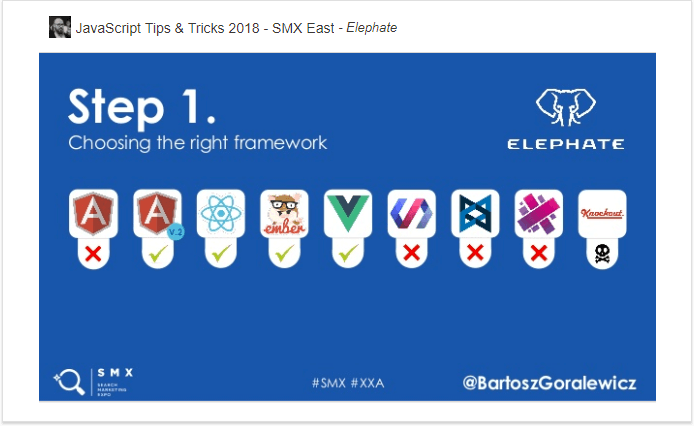

The slide shows that Bartosz vouches for the Angular v2, React, Ember, and Vue frameworks. If you want to dig into which ones are fully crawlable and indexable by Google, read Bartosz's experiment.

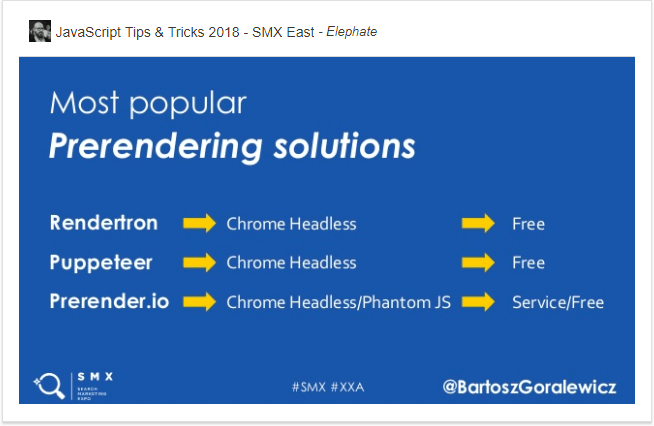

Bartosz strongly recommends prerendering if your site uses client-side rendering. With this type of rendering, Googlebot or a browser has to process JavaScript to see the content, which can cause crawling and indexing issues.

With prerendering, you feed search engine bots an HTML snapshot with no JavaScript, while users get the JS-rich version of your page. This way, you make sure your pages are rendered properly.
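Here's a minimal Python sketch of the decision a prerendering setup makes on each request: bots get the static HTML snapshot, everyone else gets the JavaScript app. It assumes you already produce the snapshots somehow (for example with a headless browser, not shown), and the crawler list in `BOT_TOKENS` is a hypothetical, incomplete example. Keep the snapshot's content equivalent to what users see; otherwise this starts to look like cloaking.

```python
# Hypothetical, incomplete list of crawler User-Agent substrings. Production
# setups use a maintained list and verify crawlers (e.g. via reverse DNS).
BOT_TOKENS = ("googlebot", "bingbot", "yandex", "baiduspider", "duckduckbot")


def is_bot(user_agent):
    """True if the User-Agent header looks like a known search engine bot."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in BOT_TOKENS)


def choose_response(user_agent, snapshot_html, app_html):
    """Serves the prerendered snapshot to bots and the JS app to users."""
    return snapshot_html if is_bot(user_agent) else app_html
```

In a real server, this check would sit in front of your normal routing, and a missing User-Agent header simply falls through to the regular app.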

Here are the alternatives to prerendering:

- Server-rendered content: all rendering work is done by your servers, and bots and browsers get ready-made HTML.

- Isomorphic JavaScript: prerendered content is served to all users on the first request, while interactions are client-side.

- Hybrid rendering: prerendered HTML is sent to both users and search engines, and JavaScript is then added on top of it.

- Static sites: all HTML files are built from data before being uploaded to a server. These sites are very fast and cheap to host, and they offer increased security and version control.
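To show what building HTML from data before upload means for static sites, here's a toy Python build step that turns a list of page records into finished HTML files. The page fields (`slug`, `title`, `body`) and the template are hypothetical; real static site generators add layouts, Markdown, and asset pipelines on top of the same idea.

```python
import html
from pathlib import Path
from string import Template

# A deliberately tiny page template; real generators use full layouts.
PAGE = Template(
    "<!doctype html>\n"
    "<html><head><title>$title</title></head>\n"
    "<body><h1>$title</h1><p>$body</p></body></html>\n"
)


def render_site(pages):
    """Renders page records into {filename: html} at build time."""
    return {
        f"{page['slug']}.html": PAGE.substitute(
            title=html.escape(page["title"]),
            body=html.escape(page["body"]),
        )
        for page in pages
    }


def write_site(rendered, out_dir):
    """Writes the rendered files to out_dir, ready to upload to any host."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for name, markup in rendered.items():
        (out / name).write_text(markup, encoding="utf-8")


site = render_site([
    {"slug": "index", "title": "Home", "body": "Fast & cheap to host."},
    {"slug": "about", "title": "About us", "body": "No server-side code."},
])
```

Because every page is plain HTML by the time it reaches the server, bots and browsers get the full content without executing any JavaScript.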

- To learn more about JavaScript SEO, start here.

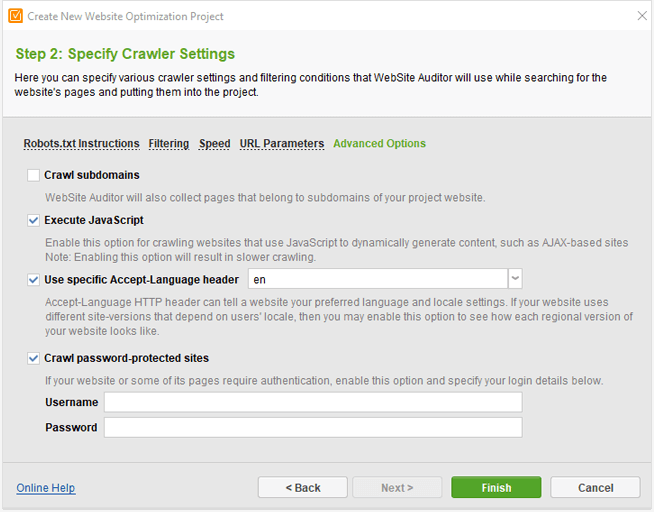

- To crawl your JavaScript-based site, use WebSite Auditor. When creating a project, check the Enable expert options box at Step 1, go to Advanced Options, and tick the Execute JavaScript box.

For an existing project, go to Preferences > Crawler Settings to do the same, and hit Rebuild Project.

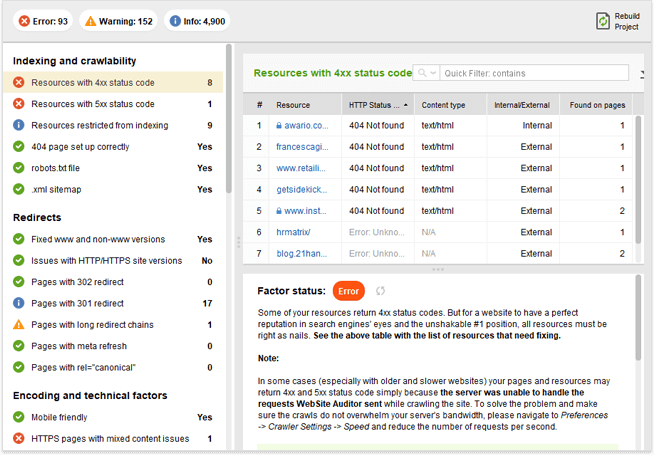

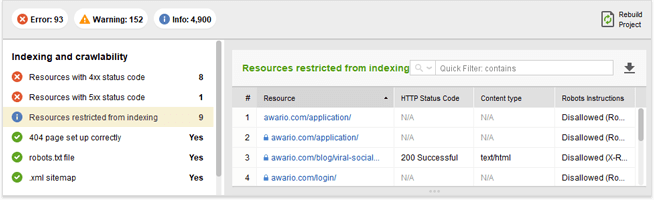

- To check whether your site has any JS resources blocked (which is considered a bad practice), use WebSite Auditor. First, check Indexing and crawlability > Resources restricted from indexing in Site Audit.

Second, go through your robots.txt to detect blocked JS and edit it right in the tool.
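If you'd like a quick scripted check as well, Python's standard `urllib.robotparser` can tell you which script URLs a robots.txt blocks. Keep in mind that this parser follows the original robots.txt rules (it doesn't understand `*` and `$` wildcards and applies the first matching rule rather than Google's longest-match precedence), so treat its verdict as a first pass. The robots.txt rules and URLs below are made up.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Returns the URLs that robots.txt disallows for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Made-up robots.txt and script URLs.
robots_txt = """\
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""
script_urls = [
    "https://example.com/assets/js/app.js",
    "https://example.com/static/vendor.js",
]
blocked = blocked_resources(robots_txt, script_urls)
```

Here only `app.js` is reported as blocked, which is exactly the kind of rule you'd want to remove so Google can render the page.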

5. Site migrations: no need to lose traffic

Any type of site migration is usually quite painful, both in execution and in the traffic loss that follows. Taylor is convinced that you don't have to lose traffic after a migration. Let's see how to pull that off.

"This is your opportunity to make the site even better for search engines and users."

Based on Taylor's experience of 30+ site migrations, the most frequent reasons site migrations fail are:

- SEO team involved late

- Bad planning

- Rushing the process just to meet a deadline

- Lack of access to tools/resources

- Poor testing, QA & feedback loop

- Migrating around a seasonal spike

- Slow to fix issues & bugs

Let's see how to build your migration strategy to avoid any kind of failure.

3 steps to strategic planning:

Basically, what you need to do at this step is assess your site's current state and make sure it's healthy. Run a thorough tech audit and content analysis with the help of crawling tools, and fix any problems you find.

When you are more or less satisfied with the site's health, compile a list of your top pages, de-duplicate it, and keep it somewhere safe: you will need it at the staging phase and after the migration.

Another equally important document is a redirect mapping file showing which URLs you've decided to change. A piece of friendly advice: try to retain as many URLs as possible.
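A redirect map is also the right place to catch chains and loops before they go live. The Python sketch below assumes a hypothetical two-column CSV (old URL, new URL, no header row) and resolves every old URL to its final destination, listing the ones that need more than one hop and the ones that never resolve.

```python
import csv
import io


def load_redirect_map(csv_text):
    """Parses 'old_url,new_url' rows (no header) into a dict."""
    rows = csv.reader(io.StringIO(csv_text))
    return {row[0].strip(): row[1].strip() for row in rows if len(row) >= 2}


def resolve_redirects(redirect_map):
    """Follows each old URL through the map to its final destination.

    Returns (final, chains, loops): final targets per source URL, sources
    that take more than one hop, and sources stuck in a redirect loop.
    """
    final, chains, loops = {}, [], []
    for source in redirect_map:
        hops = [source]
        current = redirect_map[source]
        while current in redirect_map:
            if current in hops:
                loops.append(source)
                break
            hops.append(current)
            current = redirect_map[current]
        else:  # loop ended without `break`: a final destination was found
            final[source] = current
            if len(hops) > 1:
                chains.append(source)
    return final, chains, loops


# Hypothetical mapping file contents.
mapping = load_redirect_map(
    "/old-shoes,/shoes\n"
    "/shoes,/footwear/shoes\n"
    "/sale,/offers\n"
    "/a,/b\n"
    "/b,/a\n"
)
final, chains, loops = resolve_redirects(mapping)
```

Pointing each chained source straight at its entry in `final` removes the extra hop, and the same final destinations are what your internal links should use after the move.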

Finally, benchmark the keywords driving traffic, organic traffic, the number of indexed pages, etc. With this valuable data at hand, you will spot at once when anything goes wrong.

Here is where it gets interesting. Now you have to set up a test site. Once it's ready, compare a crawl of the live site with a crawl of the test site. Then crawl multiple times as changes are applied. Take that list of top URLs and crawl them to make sure all of them return a 301 status code. Also crawl your XML sitemaps for bad status codes, and make sure all important URLs are included.
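The staging checks in this paragraph can be scripted too. Below is a Python sketch that reads an XML sitemap and reports top URLs that don't return a 301, sitemap URLs that don't return a 200, and important URLs missing from the sitemap. `get_status` is a hypothetical hook: plug in your crawler or an HTTP client that doesn't follow redirects. Here it's faked with a dictionary so the example runs offline.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Returns the <loc> values of a standard XML sitemap."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]


def staging_report(top_urls, important_urls, sitemap_xml, get_status):
    """Flags redirect, status-code, and coverage problems on a staging site."""
    listed = sitemap_urls(sitemap_xml)
    listed_set = set(listed)
    return {
        "top_not_301": [u for u in top_urls if get_status(u) != 301],
        "sitemap_not_200": [u for u in listed if get_status(u) != 200],
        "missing_from_sitemap": [u for u in important_urls if u not in listed_set],
    }


# Offline stand-in for real HTTP responses (hypothetical URLs).
fake_statuses = {
    "https://example.com/old-a": 301,
    "https://example.com/old-b": 200,
    "https://example.com/new-a": 200,
    "https://example.com/gone": 404,
}
sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/new-a</loc></url>"
    "<url><loc>https://example.com/gone</loc></url>"
    "</urlset>"
)
report = staging_report(
    top_urls=["https://example.com/old-a", "https://example.com/old-b"],
    important_urls=["https://example.com/new-a", "https://example.com/new-b"],
    sitemap_xml=sitemap,
    get_status=fake_statuses.get,
)
```

Running it after each batch of changes on staging gives you the "crawl multiple times" loop in a repeatable form.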

Also, while on staging, grab the structured data and AMP code and validate them in Google Search Console. Don't wait until launch to do it!

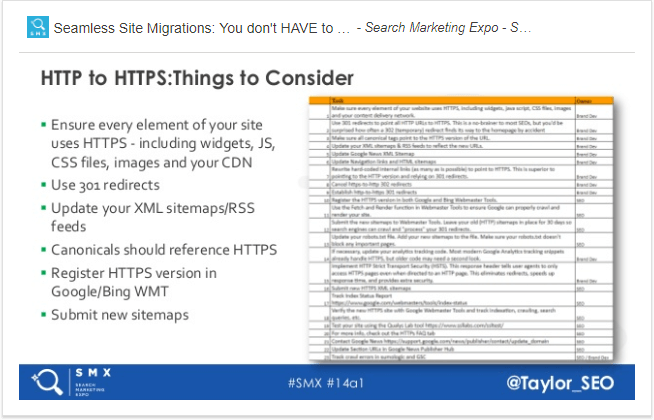

If you're migrating from HTTP to HTTPS, consider the following things:

Ta-daa! A scary and exciting day. At this stage, follow this routine:

- Create a war room:

- Monitor server errors in Google Search Console;

- Compile a list of 404 errors, do some Excel magic to understand where most of them come from, and set additional redirects if needed;

- Monitor movements of key tech factors like status codes, canonical changes, sitemap errors, site performance, etc.;

- HTTP to HTTPS traffic: create a property set in Google Search Console to combine both sites in one view.
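If Excel isn't your thing, the 404 analysis can also be a few lines of Python: group the broken URLs by their leading path segment and see which sections produce the most errors. This is just one way to slice the list; the URLs are invented, and the `depth` parameter controls how many path segments form a group.

```python
from collections import Counter
from urllib.parse import urlsplit


def group_404s(urls, depth=1, limit=5):
    """Counts 404 URLs per leading path section, most frequent first."""
    counts = Counter()
    for url in urls:
        segments = [s for s in urlsplit(url).path.split("/") if s]
        section = "/" + "/".join(segments[:depth]) if segments else "/"
        counts[section] += 1
    return counts.most_common(limit)


# Hypothetical 404 list exported from GSC or a crawler.
not_found = [
    "https://example.com/blog/2016/old-post",
    "https://example.com/blog/2017/another-post",
    "https://example.com/blog/tag/seo",
    "https://example.com/shop/item-42",
    "https://example.com/",
]
top_sections = group_404s(not_found)
```

If one section dominates, as `/blog` does here, a single missing redirect rule for that section is often the culprit.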

Tips & Lessons Learned

When you're managing serious volumes of data, it's easy to forget about less obvious but equally important things. Learn from Taylor's experience:

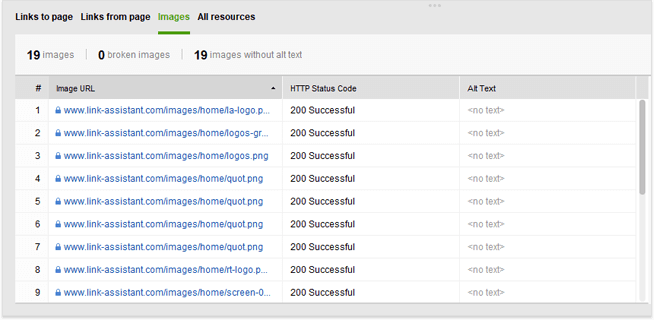

- Don't neglect image URLs: create an image sitemap to help Google find them;

- Beware of redirect chains and loops: monitor them with a crawling tool and fix any you find;

- Update internal links so they point directly to the final destination;

- Make use of Slack bots, e.g., GSC alerts can come directly to your war-room team chat.
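For the image sitemap tip, here's a Python sketch that builds one with the standard library. It uses the sitemap protocol namespace plus Google's image extension namespace (`http://www.google.com/schemas/sitemap-image/1.1`, as I understand Google's documentation); the page and image URLs are placeholders, so double-check the format against Google's current image sitemap docs before submitting.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize with a default namespace and the conventional "image" prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def image_sitemap(pages):
    """Builds an image sitemap from {page_url: [image_url, ...]}."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")


xml_text = image_sitemap({
    "https://example.com/shoes": [
        "https://example.com/img/shoe-red.jpg",
        "https://example.com/img/shoe-blue.jpg",
    ],
})
```

After a migration, feeding this function the new page and image URLs gives Google an explicit path to images whose URLs have changed.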

- When migrating, you have to do a hell of a lot of crawling and auditing. If you're looking for a tool that can crawl and audit your site from head to toe (all resources and sitemaps), try WebSite Auditor. You can use it both before and after the launch; just make sure to save different versions of the project so you can compare the results.

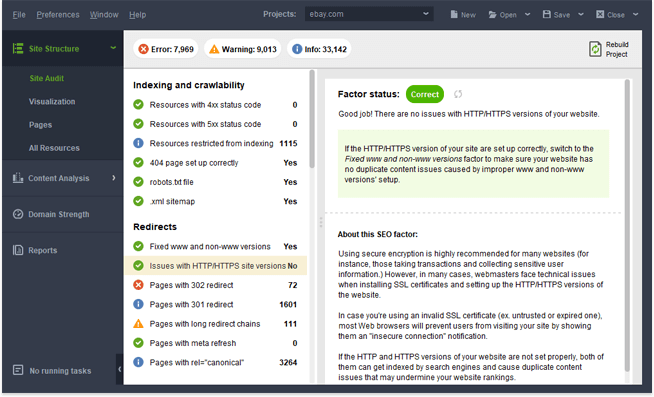

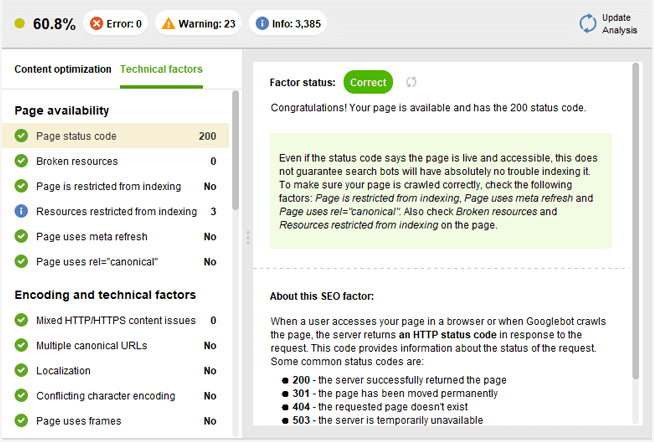

- Once you've created a project for your site in the tool, go to Site Structure > Site Audit. There you'll find the results of a complete site audit as well as valuable recommendations on how to fix different issues.

- In the Content Analysis section, you can look at your content from the tech perspective, detect issues, and fix them. Plus, it's possible to edit your content right in the tool.

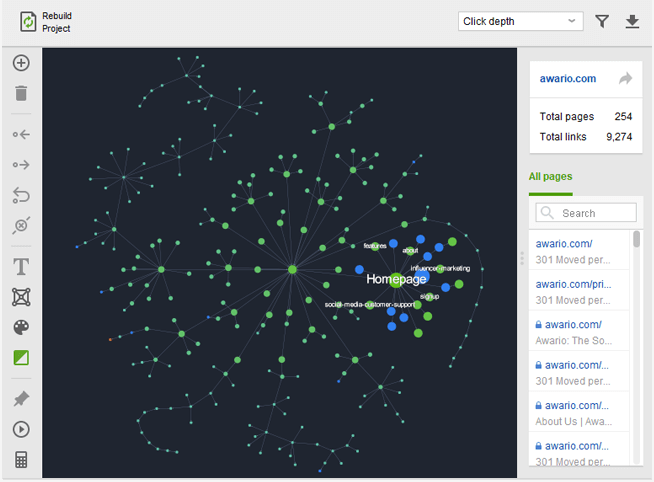

- In the Visualization section, you can get an interactive map of your internal site structure. You will immediately see whether you have long redirect chains, redirect loops, orphan pages, and non-working URLs.

- In the Pages section, analyze Images to make sure all of them have migrated.

- Plus, check out the case study of our own site migration from HTTP to HTTPS.

6. Complex SEO Problems: what to do when standard fixes don't apply

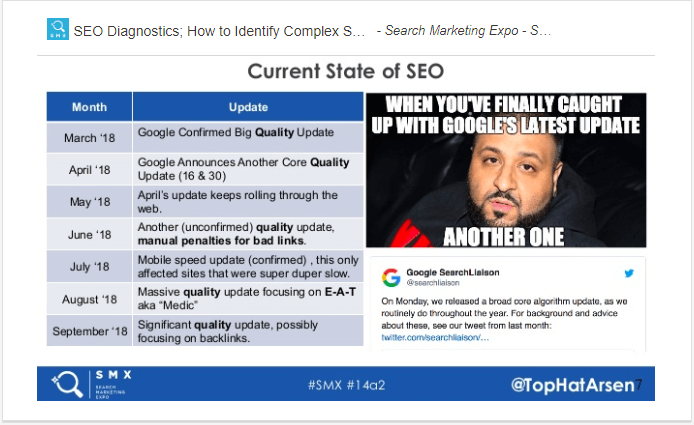

Arsen opened with alarming stats on the current state of SEO, namely the constant stream of Google updates:

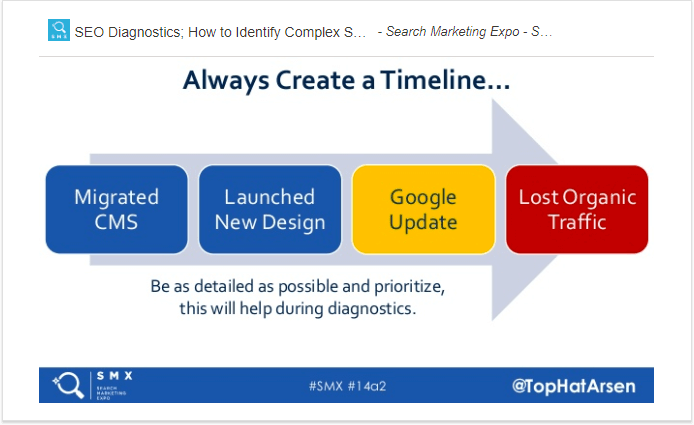

To get a grip on the current situation, Arsen prescribes some procedures for when your site behaves strangely in search results:

1. SEO triage

If you see that something has happened to your site and you can't quite grasp what, try to sort out all the facts available to you:

- Define the symptoms (loss of traffic, positions, conversions, etc.), and when they started;

- Create a timeline of your recent actions that could have caused the symptoms;

- Match your symptoms against the timeline.
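Matching symptoms against a timeline boils down to a date-window filter. The sketch below is a minimal Python version: it lists the logged actions that happened shortly before the symptoms began. The 14-day window and the sample change log are assumptions for illustration; you could also add known Google update dates to the same list.

```python
from datetime import date, timedelta


def suspects(symptom_start, actions, window_days=14):
    """Returns logged actions from the `window_days` before symptoms began.

    `actions` is a list of (date, description) tuples from your change log.
    """
    earliest = symptom_start - timedelta(days=window_days)
    return [
        (day, description)
        for day, description in sorted(actions)
        if earliest <= day <= symptom_start
    ]


# Hypothetical change log.
change_log = [
    (date(2018, 10, 8), "Updated robots.txt"),
    (date(2018, 9, 3), "Rewrote category page titles"),
    (date(2018, 10, 1), "Moved the blog to a subdomain"),
]
candidates = suspects(date(2018, 10, 10), change_log)
```

The result is a short, date-ordered list of suspects to investigate first, rather than proof of what caused the drop.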

2. SEO diagnosis

The goal here is to rule out as many potential causes of the symptoms as possible.

- Check for manual actions in GSC (and search for your brand on Google);

- Check how volatile the algo is now;

- Check how the site handled previous updates (e.g., match historic ranking fluctuations with GA data);

- Check whether these might be normal fluctuations (seasonality, etc.);

- Define whether the symptoms apply to a handful of pages or the entire website;

- Check whether it's just you by looking at your competitors;

- Check GSC for Performance, Indexing, URL inspection, etc.

- Manually check SERPs for changes;

- Check server logs and backlinks;

- Crawl and audit your site.
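To check whether the symptoms hit a handful of pages or the entire website, you can compare page-level clicks for two comparable periods (for example, exported from the Performance report in GSC). The Python sketch below returns the pages that lost more than a chosen share of their clicks and what fraction of all pages that represents; the 30% threshold and the numbers are arbitrary assumptions.

```python
def drop_scope(before, after, drop_threshold=0.3):
    """Finds pages whose clicks fell by more than `drop_threshold`.

    `before` and `after` map page URLs to clicks for two comparable periods.
    Returns (dropped_pages, share_of_all_pages).
    """
    dropped = sorted(
        page
        for page, clicks in before.items()
        if clicks > 0 and (clicks - after.get(page, 0)) / clicks > drop_threshold
    )
    share = len(dropped) / len(before) if before else 0.0
    return dropped, share


# Hypothetical click counts for two 28-day periods.
clicks_before = {"/": 1200, "/blog/guide": 400, "/shoes": 300, "/contact": 80}
clicks_after = {"/": 1150, "/blog/guide": 90, "/shoes": 310, "/contact": 78}
dropped, share = drop_scope(clicks_before, clicks_after)
```

A low share, like the 25% here, points to page-level causes; a high share suggests something sitewide, such as an algorithm update or a technical problem.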

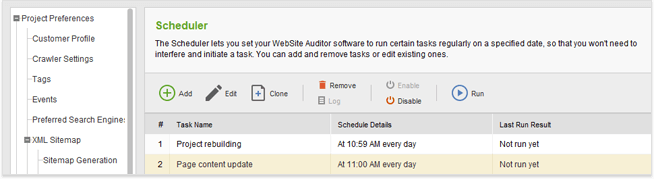

Overall, to avoid such nerve-racking situations, always stay on the alert: regularly monitor your money pages and set up recurring crawls.

SEO PowerSuite can easily help you regularly check everything you can imagine: site audits, rank fluctuations, backlinks, etc. What's more, you can auto-schedule tasks in any SEO PowerSuite tool to get regular reports on your site's current state.

7. Content Sceptics Age: how to make content linkworthy

Everybody knows it's hard to get links to your site. According to Alli, to get them you must create linkworthy content. How do you do that?

Understand who your Persona is:

- Who comes to your site: age, gender, language, location;

- Why they come: motivation and intent.

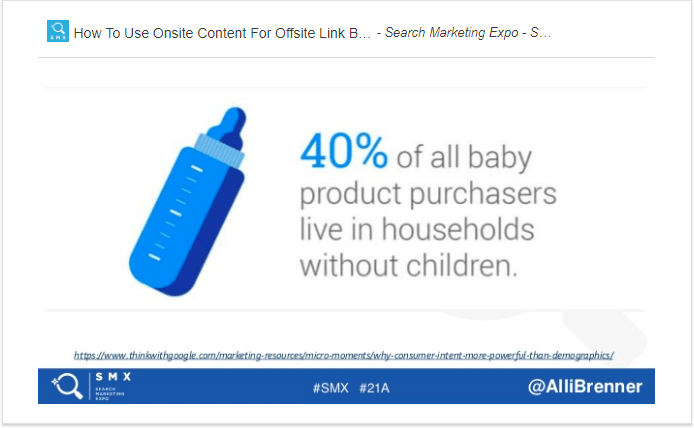

Here's a simple example of why understanding visitors' intent matters:

Thus, sites that target parents miss out on those 40% of uncles/aunts, grandparents, people invited to baby showers, etc.

Lisa busted the myth that all well-written content is linkable.

"Content is linkable when it provides value and sparks emotion."

So how do you create linkable content, according to Lisa?

Choose a linkable content type:

- Evergreen content: valuable, timeless pieces such as how-tos, tips, ultimate guides, FAQs, and infographics. You get the idea!

- Videos: they build trust, are easy to consume, and are often preferred over text. For example, YouTube how-to video searches have gone up 70% since 2015.

- Data. You are swimming in data, just go mine it: search data, ad serving data, CRM data, sales data, etc.

- Conduct your own research. It takes lots of time, but it's one of the best ways to secure links.

If you crave more details on this topic, refer to this guide on writing linkworthy content.

8. Voice Search & Virtual Assistants: how to rank for voice

Only the laziest haven't mentioned lately that the use of voice technology has been on the rise for some time. What about today? The stigma of using voice is declining fast: people are OK speaking to their phones in restrooms and theaters.

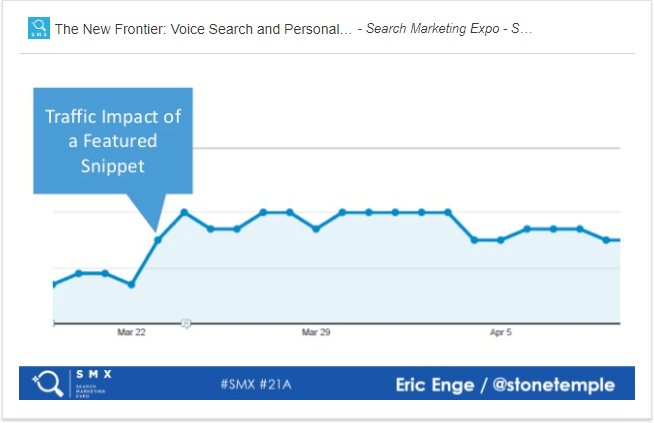

It's clear that in such situations, people are happy to get the one right answer, which we usually see on top of all results. Yes, we're talking about featured snippets, which are especially interesting for voice search. Why?

- Featured snippets are good for traffic.

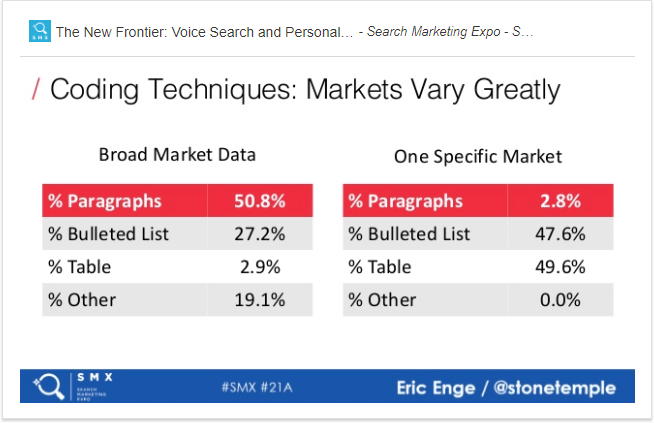

- Featured snippets are pulled into SERPs based on a number of factors. According to Stone Temple's study, featured snippets mostly come from:

  - Sites with high domain authority: 68.1%;

  - Mostly blog posts;

  - Mostly pages in the top 5 positions;

  - Successful coding techniques (depending on the market):

However, voice search is not the only voice technology people use to get help online. Voice assistants are also on the rise. They are present in every part of people's lives, since a personal assistant is a cloud technology. So, basically, in the Internet of Things, we have one assistant available everywhere that knows how to satisfy your every need: scheduling, entertainment, home control, etc.

Another interesting side of this technology is our tendency to treat voice assistants as real people. Thus, the "gender" of a voice assistant can influence how we perceive information. According to research, people tend to identify more with machine voices of their own gender. What's more, we also attach personalities to machine voices.

- Take part in developing personal assistant technologies by building Google Actions or Alexa Skills.

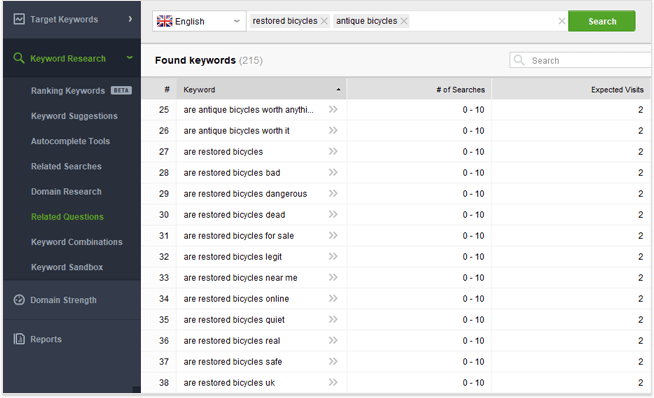

- Content pulled into featured snippets is usually built around questions. Rank Tracker has a keyword research tool developed specifically for pulling all possible questions containing your target keywords.

Create a project for your site in the tool, go to Keyword Research > Related Questions, paste in your keywords, and hit Search. Your dashboard will populate with all possible questions.

- Dive deep into this guide on how to optimize for voice search.
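If you already have a long keyword export, a crude first pass at finding question-style queries is to keep the ones that start with a question word. This Python sketch is only a rough stand-in for a dedicated tool such as Rank Tracker's Related Questions module, not a reproduction of it; the list of question words is my own assumption and will miss questions phrased differently.

```python
# An assumed, non-exhaustive list of words that usually open a question.
QUESTION_WORDS = {
    "who", "what", "when", "where", "why", "how", "which",
    "can", "does", "do", "is", "are", "should",
}


def question_keywords(keywords):
    """Keeps keywords whose first word is a question word."""
    questions = []
    for keyword in keywords:
        words = keyword.lower().split()
        if words and words[0] in QUESTION_WORDS:
            questions.append(keyword)
    return questions


found = question_keywords([
    "how to clean running shoes",
    "running shoes sale",
    "What size running shoes should I get",
    "best running shoes 2018",
])
```

The filtered list is a reasonable starting point for FAQ-style sections that answer each question directly.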

Purna gave a great presentation on optimizing customer experience for voice search, chatbots, and digital assistants.

"For people to want to use conversational interfaces, the customer experience must be better than any other existing options."

People often get frustrated and abandon voice assistants when the experience is poor and they can't accomplish what they intended. To prevent such situations, do the following:

- Master the science of customer experience:

- Laziness is entrenched in human nature. Out of several ways of achieving the same goal, people will choose the least demanding course of action.

- Two systems govern our decision-making: Unthinking (fast, emotional, intuitive) and Thinking (logical, deliberate, slower). As a business, you want your customers to stay in the Unthinking mode.

- Soak in two important signs that the future is conversational:

- By 2021, brands that redesign their websites to support visual and voice search will increase digital commerce revenue by 30%;

- The voice shopping market is expected to grow to $40B by 2022.

- Apply 4 C's of customer experience:

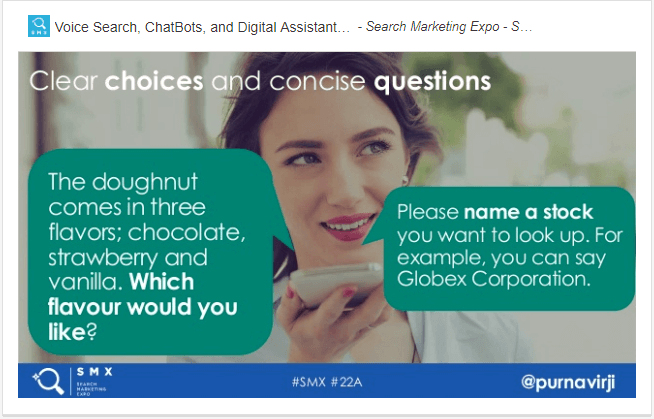

- Clarity: one of the best ways to achieve it is to provide clear choices, presented so that users don't need to go elsewhere to understand which one to make.

Tip: Write sample dialogs and roleplaying interactions.

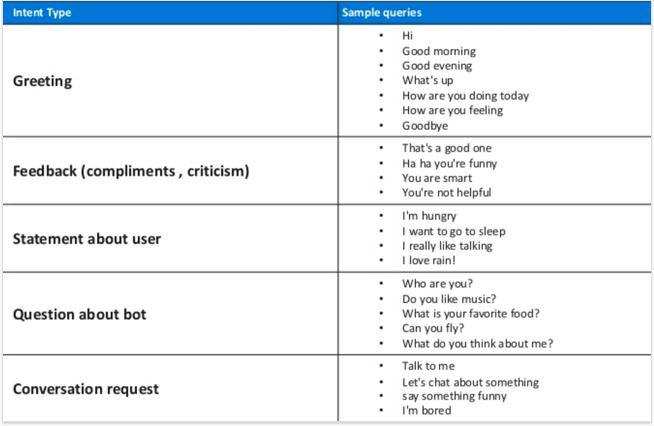

- Character: people prefer a virtual agent with an easy-to-perceive personality. However, the persona attached to your bot should depend on its purpose. Or your bot can have a few personas:

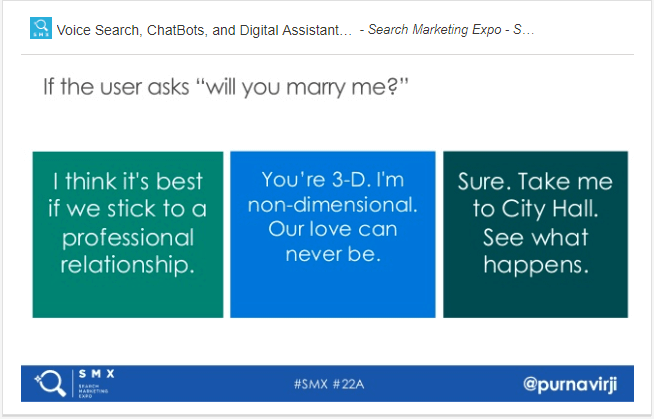

- Compassion: help bots better understand and resonate with humans. For example, people like small talk, which is usually a struggle for bots. So build small-talk scenarios to avoid "Sorry, I don't understand."

- Correction: explore ways to correct an error without having to say sorry; for example, offer alternatives instead.

- Test the conversation:

Test logic -> Code & Debug -> Beta test -> A/B testing
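To make the Compassion and Correction tips more concrete, here's a toy rule-based bot in Python: it handles a bit of small talk and, instead of apologizing when it doesn't understand, offers the user clear alternatives. The intents, keywords, and replies are hypothetical, and a real assistant would rely on a natural-language platform (such as those behind Google Actions or Alexa Skills) rather than keyword matching. Checking its replies against sample dialogs is a small-scale version of the "Test logic" step.

```python
# Hypothetical intents: trigger keywords and the reply for each.
INTENTS = {
    "order_status": (("order", "delivery", "tracking"),
                     "Let me look that up. What's your order number?"),
    "store_hours": (("open", "hours", "close"),
                    "Which store location are you asking about?"),
}

# Compassion: canned answers for small talk instead of a dead end.
SMALL_TALK = {
    "hello": "Hi there! I can check an order or tell you our store hours.",
    "thank": "Happy to help! Anything else?",
}

# Correction: offer alternatives rather than "Sorry, I don't understand."
FALLBACK = "I can check your order status or our store hours. Which would you like?"


def reply(utterance):
    """Returns the bot's answer using simple keyword matching."""
    text = utterance.lower()
    for trigger, answer in SMALL_TALK.items():
        if trigger in text:
            return answer
    for keywords, answer in INTENTS.values():
        if any(word in text for word in keywords):
            return answer
    return FALLBACK
```

Even in this toy version, the fallback keeps the conversation moving by naming what the bot can do, which is the essence of the Correction tip.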

These are my top insights from SMX East. This is me full of those insights:

Have you attended the conference? What do you think about the current state of the industry? Let's discuss it in the comments!

By: Valerie Niechai