Did you know that 76% of people who search for something nearby on their smartphone visit a business within a day?

In 2025, capturing these local searchers on Google can make or break a business. As consumers increasingly rely on “near me” searches and mobile devices to find services, Google has become the most powerful platform for reaching local customers exactly when they’re ready to buy.

In this guide, I’ll break down how to build a Local SEO strategy from scratch with clear, actionable steps for boosting your visibility in 2025. Plus, I’ll share some brilliant hacks on how ChatGPT can help with your Local SEO efforts.

But first, let me walk you through the very basics.

Local SEO is the process of improving your business’s visibility in local search results.

It’s a crucial strategy for businesses that want to reach nearby customers, as it helps your business appear prominently on Google Search and Google Maps when people look for services you offer.

For example, if you search for coffee shop near me, Google will return a local pack: a map with a short list of nearby coffee shops.

The main tasks involved in Local SEO include finding the right local keywords that match what customers are searching for, optimizing your Google Business Profile, and ensuring consistent NAP information across directories and platforms.

In Local SEO, NAP stands for Name, Address, and Phone number. These are the core details that identify a business online. NAP consistency means making sure your business’s name, address, and phone number are the same everywhere they appear on the web—like on your website, Google Business Profile, social media, and local directories.

Together, these steps help Google understand where your business is located and what it offers, making it more likely to appear in local search results.

Local SEO is increasingly critical in 2025 as search behavior continues to prioritize local, mobile, and immediate results.

Here are the main reasons Local SEO is crucial this year:

Searches for terms like “near me” have exploded in recent years, and they’re primarily driven by mobile users looking for services and products nearby.

In fact, 76% of people who search for something nearby on their smartphone visit a business within a day. This shift to mobile-first, location-based search means that businesses optimized for Local SEO are well-positioned to capture this ready-to-buy audience.

Google’s Local Pack—the set of three local business listings that appear at the top of search results—has become a highly valuable piece of online real estate.

Appearing in the Local Pack can greatly increase your business’s visibility, as it is prominently displayed above the standard organic search results and provides essential information like location, hours, and reviews.

With Google Maps integrated into the Local Pack, businesses with strong Local SEO are also more likely to appear on Google Maps, which further improves local discoverability.

Local searches tend to have higher conversion rates compared to broader search queries.

When someone searches for emergency plumber near me or restaurant in [city], they’re typically ready to take immediate action.

According to Google's data, 28% of local searches lead to a purchase. By focusing on Local SEO, businesses can attract highly motivated customers who are likely to convert, whether that’s by visiting a store, making a reservation, or calling to book a service.

As more businesses realize the value of Local SEO, competition for visibility in local search results has intensified.

To stand out, having a Google Business Profile is no longer enough—businesses must now optimize for reviews, keep NAP details consistent, and regularly post location-relevant content.

With a well-rounded Local SEO strategy, businesses can improve their local rankings and gain an advantage over competitors who are less optimized.

Customer reviews are now more influential than ever in Local SEO. Google’s algorithm increasingly relies on reviews as a signal of business credibility and popularity.

Businesses with a higher volume of positive reviews are more likely to rank well, especially in competitive markets. Additionally, reviews help build customer trust, and 88% of consumers say they trust online reviews as much as personal recommendations.

By encouraging and managing reviews as part of Local SEO, businesses can enhance both their rankings and their reputation.

Artificial Intelligence is increasingly valuable in Local SEO, helping businesses make data-driven decisions and automate key processes. In 2025, AI supports Local SEO by:

Integrating AI into Local SEO allows businesses to stay competitive, act quickly on trends, and better serve local customers.

Local SEO works by helping Google understand two key things about your business: where it’s located and what it offers.

This is done through optimized local listings, relevant keywords, consistent business information, and positive customer reviews. When these elements are aligned, Google is more likely to rank your business higher for local searches.

Local SEO is split into two main areas because Google displays two types of results for local searches: Local Pack results and organic search results. Your business can appear in both, which helps increase visibility to local searchers.

The Local Pack, often found at the top of Google’s search results for local queries, showcases the top three local businesses related to the search term.

This section includes each business’s name, location on a map, hours, contact information, and customer ratings. Local Pack results are especially valuable because they appear prominently at the top of the search engine results page, giving users quick access to relevant business details without further clicks.

Below the Local Pack, Google displays traditional organic search results, often referred to as the “ten blue links.” These results lead directly to websites rather than Google listings, and they follow Google’s standard ranking criteria.

For local businesses, ranking in these organic results can complement a Local Pack presence, offering another way to capture attention from searchers looking for services in the area.

For ranking businesses in Google Maps and the Local Pack, Google uses three main criteria: relevance, distance, and prominence.

For traditional organic search results (like regular website listings under the Local Pack), Google considers other factors alongside relevance and location, such as:

By optimizing for these factors, Local SEO improves your visibility in both the Local Pack and standard search results, helping potential customers find and choose your business.

Starting with Local SEO doesn’t have to be complicated, but it does require a structured approach to ensure your business is properly optimized for Google’s local search results.

Here’s a step-by-step guide on how to do Local SEO:

Google Business Profile is the ultimate business directory. When you create a profile here, all the information you enter feeds directly into Google’s index, making your business eligible for that coveted Local Pack placement on search results pages.

In fact, 36% of SEOs consider Google Business Profile to be the single most important factor for ranking in the map pack and 6% say it impacts regular organic results, according to a survey by BrightLocal. And for good reason: a Google Business Profile is almost required if you’re aiming to rank well in local searches.

To create your profile, go to the GBP create page and follow the setup instructions.

The setup instructions are straightforward, but there’s an important SEO consideration: thoughtfully add keywords to your business name. This can have a noticeable impact on your local rankings—similar to how keywords in titles and H1s work in content SEO.

For example, if you run a cafe and your legal business name doesn’t mention “cafe” or “coffee,” adding one of these terms to your name on your Google Business Profile can be beneficial. So, instead of “Beans & Dreams,” you might want to go with “Beans & Dreams Coffee House.”

However, it’s essential to follow Google’s business naming guidelines to avoid potential issues down the line.

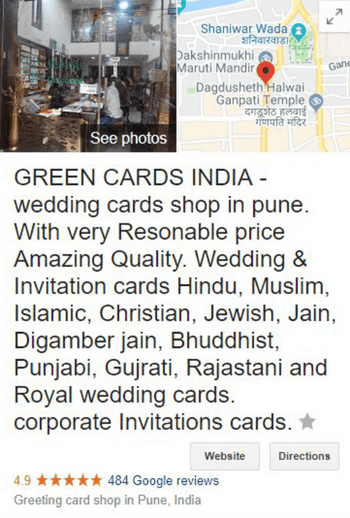

Keyword stuffing can lead to penalties or verification problems with Google, and excessive keywords may eventually need to be removed. A name overloaded with keywords, such as the made-up “Beans & Dreams Coffee House Best Espresso Cafe Los Angeles,” is something to steer clear of if you want a healthy GBP strategy.

To get the most from your profile, Google suggests a few key practices:

Optimizing your profile with these practices can help build trust and visibility, setting your business up for success in local search.

Local keyword research is about identifying how potential customers search for the services you offer in a specific area. By optimizing your website for these terms, you increase the likelihood of appearing in local searches and attracting relevant traffic.

Here’s how to get started:

Begin by listing out the services you provide, as people often search in different ways for the same type of service.

For example, if you’re a florist, some customers might simply search for florist or flower shop, while others may be more specific, looking for terms like wedding flowers or same-day flower delivery. By mapping out each service as a keyword, you can cover a range of search intents and improve your visibility.

Here’s a potential list for a florist:

Once you have a base list, expand it by using each service keyword as a “seed” in a keyword research tool.

I will use Rank Tracker here as it offers more than 20 keyword research tools.

For starters, I’m going to check keyword suggestions from the Keyword Planner module:

Then, I will enrich my keyword list with the help of Autocomplete Tools. Here, I will add Los Angeles to my seed keyword so that the tool collects keywords only relevant to my specific location.
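If you want to prepare location-modified seeds before pasting them into a keyword tool, the idea can be sketched in a few lines of Python. This is only an illustration: the function name `expand_local_keywords` and the sample seeds are assumptions, not part of Rank Tracker or any other tool.

```python
from itertools import product


def expand_local_keywords(services, locations):
    """Combine each service keyword with each location in two common word orders."""
    keywords = []
    for service, location in product(services, locations):
        keywords.append(f"{service} {location}".lower())
        keywords.append(f"{location} {service}".lower())
    # dict.fromkeys drops duplicates while keeping first-seen order
    return list(dict.fromkeys(keywords))


# Hypothetical seed list for a florist
seeds = ["wedding flowers", "same-day flower delivery"]
areas = ["Los Angeles"]

for keyword in expand_local_keywords(seeds, areas):
    print(keyword)
```

The resulting list is just a starting point. Search volume and local intent still need to be checked for each phrase.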

If you want to cover all relevant keywords, try also analyzing a competitor’s website. This can be done via the Domain Analysis module in Rank Tracker.

Simply enter the URL of any of your competitors and scroll the report down to the section called Top Organic Keywords.

Click See all to reveal all service-related keywords your competitor ranks for that might be useful for your own site.

Check out this quick tutorial to learn more about all keyword research tools found in Rank Tracker.

Many keyword research tools display national search volumes, but for a more specific breakdown, try Google Keyword Planner and set it to your area.

To get a general sense of keyword priority, you can still rely on national search data. For instance, if wedding flowers has higher search volume than event floral arrangements nationally, it’s likely a similar pattern exists locally. This can guide you in emphasizing certain services on your website based on demand.

Not every keyword is a good fit for Local SEO, so it’s essential to check if there’s local intent behind each term.

There are two ways to do this.

The first one is manual and quite time-consuming. You have to search the keyword in Google and check the results. If you see a Local Pack (the map and three business listings) or other local results, Google recognizes it as a local search query. This makes it a strong candidate for your Local SEO strategy.

The second option is to use Rank Tracker to quickly check all keywords for local SERP features.

You can also narrow down the analysis and see if the specific keywords trigger local pack results in your area. You can go as granular as a certain street address on the map.

Your homepage won’t rank for every keyword, so it’s essential to assign keywords to the most relevant pages.

Separate keywords by service type to make each page’s focus clear. For example, if you’re targeting wedding flowers and sympathy flowers, create individual pages for each service to improve relevance and avoid keyword overlap.

However, if the keywords are variations of the same service, like bouquet delivery and same-day flower delivery, you can group them on a single page.

This approach ensures that search engines can easily match each page to specific search terms, improving your site’s relevance and structure.
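As a rough sketch of this keyword-to-page mapping, the snippet below assigns each keyword to a landing page based on a marker word. The page URLs, the marker words, and the function name `map_keywords_to_pages` are all hypothetical. A real keyword map would usually be reviewed by hand.

```python
from collections import defaultdict

# Hypothetical marker words and the landing pages they belong to
SERVICE_PAGES = {
    "wedding": "/wedding-flowers/",
    "sympathy": "/sympathy-flowers/",
    "delivery": "/flower-delivery/",
}


def map_keywords_to_pages(keywords, service_pages, default_page="/"):
    """Group keywords under the first page whose marker word they contain."""
    pages = defaultdict(list)
    for keyword in keywords:
        page = next(
            (url for marker, url in service_pages.items() if marker in keyword.lower()),
            default_page,  # keywords with no marker fall back to the homepage
        )
        pages[page].append(keyword)
    return dict(pages)


keywords = [
    "wedding flowers",
    "sympathy flowers",
    "bouquet delivery",
    "same-day flower delivery",
    "florist",
]
for page, assigned in map_keywords_to_pages(keywords, SERVICE_PAGES).items():
    print(page, assigned)
```

Here the two delivery variations land on one page, while wedding and sympathy flowers each get their own. Because the first matching marker wins, a phrase like "wedding flower delivery" would go to the wedding page.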

To group keywords by topic, you can use Rank Tracker. Its built-in keyword grouper makes this task much faster than sorting keywords by hand.

After grouping keywords, you can easily assign them to corresponding landing pages using the Keyword Map module.

While your Google Business Profile is essential for reaching local customers, your website can also play a powerful role in local SEO. By localizing your site content, you increase visibility in local searches and make it easier for potential customers in your area to find you.

A simple way to localize your content is by naturally incorporating your city, county, or region name throughout your site.

For example, if you’re a florist in Los Angeles, you might include phrases like Los Angeles wedding flowers or Downtown Los Angeles bouquet delivery in your service descriptions. Adding location-based terms in titles, headers, and content reinforces your relevance to local searches and helps you appear in more high-value results.

Another effective strategy is to get involved in local events and showcase these activities on your website. If your business sponsors a local festival, offers services at a community fair, or participates in charity events, create a post about it.

For instance, a bakery might share an article about their participation in a Boulder Farmers Market or a Los Angeles Fall Festival with photos and a summary of their contributions. When you write about these events, you can integrate locally relevant keywords, like Boulder farmers market treats or Los Angeles festival catering, to increase visibility for local searchers.

A well-executed localization strategy not only drives local traffic to your site but also helps convert that traffic into qualified leads within your service area.

Once your business is listed on Google Business Profile and other relevant directories, you’ll likely start receiving reviews from clients. Both the quantity and quality of these reviews directly influence your position in the Local Pack, visibility on Google Maps, and, to a lesser degree, your organic rankings.

In fact, according to a 2021 study by BrightLocal, 17% of SEOs consider reviews to be the top factor for Local Pack rankings. Some studies suggest that reviews are the second most important ranking factor in the local pack.

To increase the number of reviews you receive, consider actively encouraging satisfied clients to leave feedback. This could be as simple as adding a “Review Us” banner on your website or including a reminder in follow-up emails.

You could also attach a review card to your deliveries or ask customers directly at checkout.

Some businesses go further and offer incentives to clients who review their services. Avoid this: Google’s guidelines prohibit offering or accepting payment, discounts, or free goods or services in exchange for reviews.

When it comes to building a strong rating, managing negative reviews is just as important as getting positive ones. If you receive a complaint, here are a few best practices to improve the situation:

Finally, make it a habit to respond to all reviews—positive and negative. Not only does this show your commitment to customer satisfaction, but it also builds trust with both Google and potential clients.

Over time, a steady stream of genuine, thoughtful reviews will strengthen your online reputation and increase your visibility in local search results.

Your business’s Name, Address, and Phone number (NAP) are essential pieces of information for Local SEO. The more consistently your NAP details appear across the web, the more trust Google places in your business’s legitimacy.

However, SEO experts suggest that citations are gradually losing their impact as a ranking signal, with behavioral SEO factors steadily taking the spotlight.

Two primary sources for NAP information are the structured data on your website and your Google Business Profile.

The key to effective NAP citations is consistency. Ensure that your business name, address, and phone number are presented in the same format across all platforms. This includes using the exact same symbols, abbreviations, and phone number formats.

Consistent NAP details reinforce Google’s confidence in your business, helping improve your visibility in local search results.
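A consistency check like this can be partly automated. The sketch below compares directory listings against a reference record and separates pure formatting differences from real mismatches. The abbreviation table, the US-style phone handling, and all names and sample data are assumptions made for illustration.

```python
import re

# Assumed abbreviation table; extend it for your own address formats
ABBREVIATIONS = {"street": "st", "avenue": "ave", "boulevard": "blvd", "suite": "ste"}


def normalize_phone(phone):
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", phone)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def normalize_text(value):
    """Lowercase, strip punctuation (except &), and apply common abbreviations."""
    words = re.sub(r"[^\w\s&]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def nap_key(listing):
    return (
        normalize_text(listing["name"]),
        normalize_text(listing["address"]),
        normalize_phone(listing["phone"]),
    )


def classify(reference, listing):
    """Return 'exact', 'format differs', or 'mismatch' for one listing."""
    if all(reference[f] == listing[f] for f in ("name", "address", "phone")):
        return "exact"
    if nap_key(reference) == nap_key(listing):
        return "format differs"
    return "mismatch"


# Hypothetical reference record and directory listings
reference = {"name": "Beans & Dreams Coffee House",
             "address": "123 Main Street, Suite 4",
             "phone": "(213) 555-0100"}
listings = {
    "directory-a": {"name": "Beans & Dreams Coffee House",
                    "address": "123 Main St., Ste 4",
                    "phone": "+1 213-555-0100"},
    "directory-b": {"name": "Beans and Dreams",
                    "address": "123 Main Street, Suite 4",
                    "phone": "(213) 555-0100"},
}
for source, listing in listings.items():
    print(source, classify(reference, listing))
```

Listings flagged as "format differs" carry the same details in a different format, so they are candidates for cleanup. A "mismatch" points to details that actually disagree and should be corrected first.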

Listing your business on relevant directories is a helpful tactic in Local SEO, allowing Google to verify your business information and making it easier for customers to discover you.

According to BrightLocal’s study, 7% of SEOs consider citations to be an influential factor for ranking in the Local Pack and organic results.

Although the perceived importance of citations has gradually declined, citations are still valuable, especially since many directories rank well in local search results. Being listed on them increases your visibility when people search for related services.

For instance, if I google Italian restaurants Los Angeles, I get a carousel of listings from TripAdvisor or Yelp above the Local Pack results.

Follow these best practices for directory listings:

Building quality directory listings with these steps reinforces your Local SEO strategy, helping your business reach a broader audience through reputable channels.

On-page SEO is essential for improving your website’s visibility in both organic search results and Google’s Local Pack. A well-optimized page strengthens your chances of ranking locally and supports overall SEO efforts.

According to BrightLocal, 34% of SEOs consider on-page signals the most critical factor for organic rankings, while 16% see them as key for Local Pack results.

To enhance your on-page SEO, follow these best practices:

You can also use SEO PowerSuite’s WebSite Auditor to assess your on-page elements. With the Content Editor module, you can compare your pages against top-ranking competitors, receiving tailored AI suggestions for keywords, meta tags, and other on-page improvements.

Backlinks are one of the most important signals for ranking on Google, with 31% of SEOs citing them as critical for organic rankings and 13% for Local Pack results, according to BrightLocal’s study.

In fact, Google has previously confirmed backlinks as one of their top three ranking factors. But for local SEO specifically, it’s not just about the quantity of links—it’s about building links from local sources.

Google sees linking websites as part of a network or community. If your backlinks come from local sites, Google recognizes your business as part of that local community, which boosts your relevance in local search results.

On the other hand, backlinks from sites outside your area can still help with general SEO, but they don’t contribute as directly to local rankings.

To find local backlink opportunities, one effective strategy is to analyze your competitors’ backlinks. In SEO SpyGlass, you can use the Link Intersection feature to identify sites that link to multiple local competitors but don’t yet link to you.

These shared links are strong prospects for your outreach, as they’re more likely to be relevant to your niche and open to local placements.
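The logic behind a link intersection is essentially a set comparison. Here is a minimal sketch of that idea, assuming you have already exported referring domains for each competitor. It does not reproduce how SEO SpyGlass works internally, and the domains and function name are made up.

```python
def link_intersection(competitor_links, own_links, min_competitors=2):
    """Find domains that link to several competitors but not to your own site."""
    linked_competitors = {}
    for competitor, domains in competitor_links.items():
        for domain in set(domains):
            linked_competitors.setdefault(domain, set()).add(competitor)

    prospects = [
        (domain, sorted(competitors))
        for domain, competitors in linked_competitors.items()
        if len(competitors) >= min_competitors and domain not in own_links
    ]
    # Domains shared by the most competitors come first, then alphabetical order
    return sorted(prospects, key=lambda item: (-len(item[1]), item[0]))


# Hypothetical referring-domain exports
competitor_links = {
    "rival-a.example": ["laweekly.example", "labloom.example", "cityguide.example"],
    "rival-b.example": ["laweekly.example", "cityguide.example"],
    "rival-c.example": ["laweekly.example"],
}
own_links = {"cityguide.example"}

for domain, competitors in link_intersection(competitor_links, own_links):
    print(domain, "links to", len(competitors), "competitors")
```

Raising `min_competitors` narrows the list to sites that link to more of your local rivals, which tends to surface the strongest outreach prospects.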

Best practices for building local backlinks:

Building quality local backlinks not only strengthens your position in organic search but also increases your visibility in Google’s Local Pack, helping you connect with nearby customers.

Schema markup is structured data, based on the schema.org vocabulary, that helps Google interpret your page content more effectively. For local SEO, LocalBusiness structured data is particularly useful, as it tags your business’s name, address, and contact details, signaling your relevance to a specific location.

You can use Google’s Structured Data Markup Helper to add this markup easily. Simply submit your URL, highlight relevant information like your business name and address, and download the generated code to add to your website.

If you’re using a CMS, some structured data may be built-in or accessible through plugins—just be mindful of site speed if adding additional plugins.
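If you prefer to write the markup yourself, the snippet below sketches how a basic LocalBusiness block in JSON-LD could be generated. The property names come from schema.org, while the function name and the business details are placeholders. Treat it as a minimal starting point rather than a complete markup.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, country, phone, url):
    """Build a minimal schema.org LocalBusiness JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "telephone": phone,
        "url": url,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder business details
print(local_business_jsonld(
    name="Beans & Dreams Coffee House",
    street="123 Main Street, Suite 4",
    city="Los Angeles",
    region="CA",
    postal_code="90012",
    country="US",
    phone="+1-213-555-0100",
    url="https://example.com",
))
```

The printed tag goes into the page's HTML. Keep the name, address, and phone number identical to the ones on your Google Business Profile, and validate the result with a structured data testing tool before publishing.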

ChatGPT can be a valuable tool for local SEO, helping you streamline tasks, create optimized content, and manage your online presence more efficiently.

Here are just a few ideas on how you can use ChatGPT for Local SEO:

ChatGPT’s versatile capabilities make it a practical tool to streamline your local SEO, from content creation to customer engagement, helping you reach and connect with a local audience effectively.
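To keep prompts consistent across locations, you might template them. Below is a small sketch of a prompt builder for drafting review replies. The wording of the prompt, the rating threshold, and the function name are assumptions, and the resulting text still has to be pasted into ChatGPT and edited by a person.

```python
def review_reply_prompt(business, city, rating, review_text):
    """Build a prompt asking for a short public reply to a customer review."""
    # Assumed threshold: treat 3 stars or fewer as a complaint
    tone = "apologetic and solution-focused" if rating <= 3 else "warm and appreciative"
    return (
        f"You are replying on behalf of {business}, a local business in {city}.\n"
        f"Write a short, {tone} public reply to this {rating}-star review.\n"
        "Do not offer incentives or ask the customer to change the rating.\n"
        f'Review: "{review_text}"'
    )


print(review_reply_prompt(
    business="Beans & Dreams Coffee House",
    city="Los Angeles",
    rating=2,
    review_text="The order took 25 minutes and the latte was cold.",
))
```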

After putting in the effort to optimize for a specific location, it’s essential to check whether those efforts have paid off with your local audience. Regular ranking stats, like those in Google Search Console, often show country-wide or even global data, which doesn’t give you insight into your actual local reach.

To get a clear picture of how your pages perform locally, use a rank tracking tool that can simulate search results from a specific location. This feature, available in Rank Tracker, lets you see rankings as if searched from an exact city or neighborhood.

In Rank Tracker, head to Preferences > Preferred Search Engines, then click Add custom beside your search engine.

In the pop-up, select Preferred Location and enter any location that’s recognized by Google Maps. You can set this as precisely as a specific building if needed. Give this location a short name to appear in your Rank Tracker workspaces, then confirm.

Now, you’ll be able to track and view rankings from this exact location.

This setup is especially useful if you serve multiple areas, allowing you to monitor performance across distinct locations and optimize pages accordingly.

Local SEO is about connecting with your community.

By optimizing content, building local backlinks, and tracking precise location-based rankings with tools like Rank Tracker, you’ll ensure your business reaches local searchers effectively. With consistent effort, these strategies build a stronger local presence, attracting more relevant traffic and driving growth.